Problem

This project sat inside a broader PLG initiative I was leading at LeadGenius — layering self-serve growth motions onto an existing sales-led model. The upstream work (onboarding and activation) was already shipping results. Users were signing up, activating, and reaching their first aha moment faster than ever.

But activation alone doesn't drive retention or revenue. The growth loop depended on what happened next: campaign creation. Every campaign meant more data consumed, more value received, and a stronger case for renewal. Campaign volume was the leading indicator for retention and expansion.

The metric at risk: only 5% of teams could complete campaign setup without help.

I started in Heap, not Figma. Mapping the full creation funnel — every step, every drop-off, segmented by role and account size — surfaced two distinct failure patterns:

Individual users lost momentum progressively. No single step killed them — each unfamiliar screen eroded confidence until they abandoned. They were accumulating confusion.

Team accounts completed the flow at higher rates, but their campaigns were frequently paused or deleted post-launch. Misconfigured enrichment rules, mismatched targeting, broken sequences. The flow let people finish — but not finish correctly.
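The two patterns show up differently in funnel data: the first as steady step-to-step loss, the second as a gap between campaigns launched and campaigns still live afterwards. A minimal sketch of how they could be separated, assuming hypothetical step names and made-up counts (none of these figures come from the Heap analysis):

```python
from typing import Dict, List, Tuple

# Hypothetical step names, used for illustration only.
STEPS = ["start", "audience", "enrichment", "sequence", "launch"]


def step_conversion(counts: Dict[str, int]) -> List[Tuple[str, str, float]]:
    """Return (from_step, to_step, conversion) for each adjacent pair of steps."""
    rows = []
    for prev, nxt in zip(STEPS, STEPS[1:]):
        reached_prev = counts[prev]
        rate = counts[nxt] / reached_prev if reached_prev else 0.0
        rows.append((prev, nxt, rate))
    return rows


def healthy_completion(started: int, launched: int, paused_or_deleted: int) -> float:
    """Share of started campaigns that launched and were not paused or deleted."""
    if started == 0:
        return 0.0
    return (launched - paused_or_deleted) / started


# Made-up counts: gradual erosion at every step, no single cliff.
individual = {"start": 100, "audience": 80, "enrichment": 60, "sequence": 45, "launch": 30}

for prev, nxt, rate in step_conversion(individual):
    print(f"{prev} -> {nxt}: {rate:.0%}")

# Made-up counts: many launches, but half of them fail afterwards.
print(f"healthy completion: {healthy_completion(100, 70, 35):.0%}")
```

Reading raw completion next to healthy completion is what separates "users cannot finish" from "users finish incorrectly".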

Hotjar session replays confirmed it: users hovering over labels they didn't understand, rage-clicking between steps, abandoning at configuration screens they weren't ready for. I partnered with sales to observe customers creating campaigns live — real-time sessions where users narrated their thinking.

"I could tell it was powerful, but I didn't know where to start."

"I need to trust that my team's campaigns are set up right without checking every one myself."

What changed my understanding

- The flow was collapsing three users into one. An IC creating a quick campaign, a team lead coordinating shared efforts, and an admin setting organisational guardrails — all forced through the same undifferentiated path. Each had different needs, different risks, and a different definition of "done."

- Completion ≠ success. Team accounts had decent completion rates but high post-launch failure rates. The flow was optimised for finishing, not for finishing correctly.

- The product was asking for decisions before giving context. Users encountered enrichment rules, data provider selections, and targeting logic before they had any framework for evaluating those choices.

Approach

Constraints

- Engineering capacity was shared. The same team supported the onboarding workstream. I had to sequence the rollout to avoid blocking both initiatives — which meant shipping the full role-based system incrementally, not all at once.

- The sales-led model couldn't break. Enterprise accounts still needed the high-touch path. Every self-serve change had to coexist with existing AM-assisted workflows.

- No dedicated research team. I ran research myself — Heap analysis, Hotjar reviews, live sessions with sales — while simultaneously designing. Speed mattered because campaign abandonment was actively costing renewals every month.

Strategy

One principle guided every design decision: optimise for confidence, not speed.

The data had already shown that a faster flow didn't produce better outcomes — it produced faster abandonment or misconfigured campaigns. Users needed to understand what they were building, trust the choices they were making, and feel progressively more capable as they moved through the flow.

This shaped the information architecture (sequential wizard over parallel forms), the interaction model (progressive disclosure by role), the copy (inline guidance replacing tooltips), and the rollout sequencing (admin-first to establish governance before opening IC and lead flows).

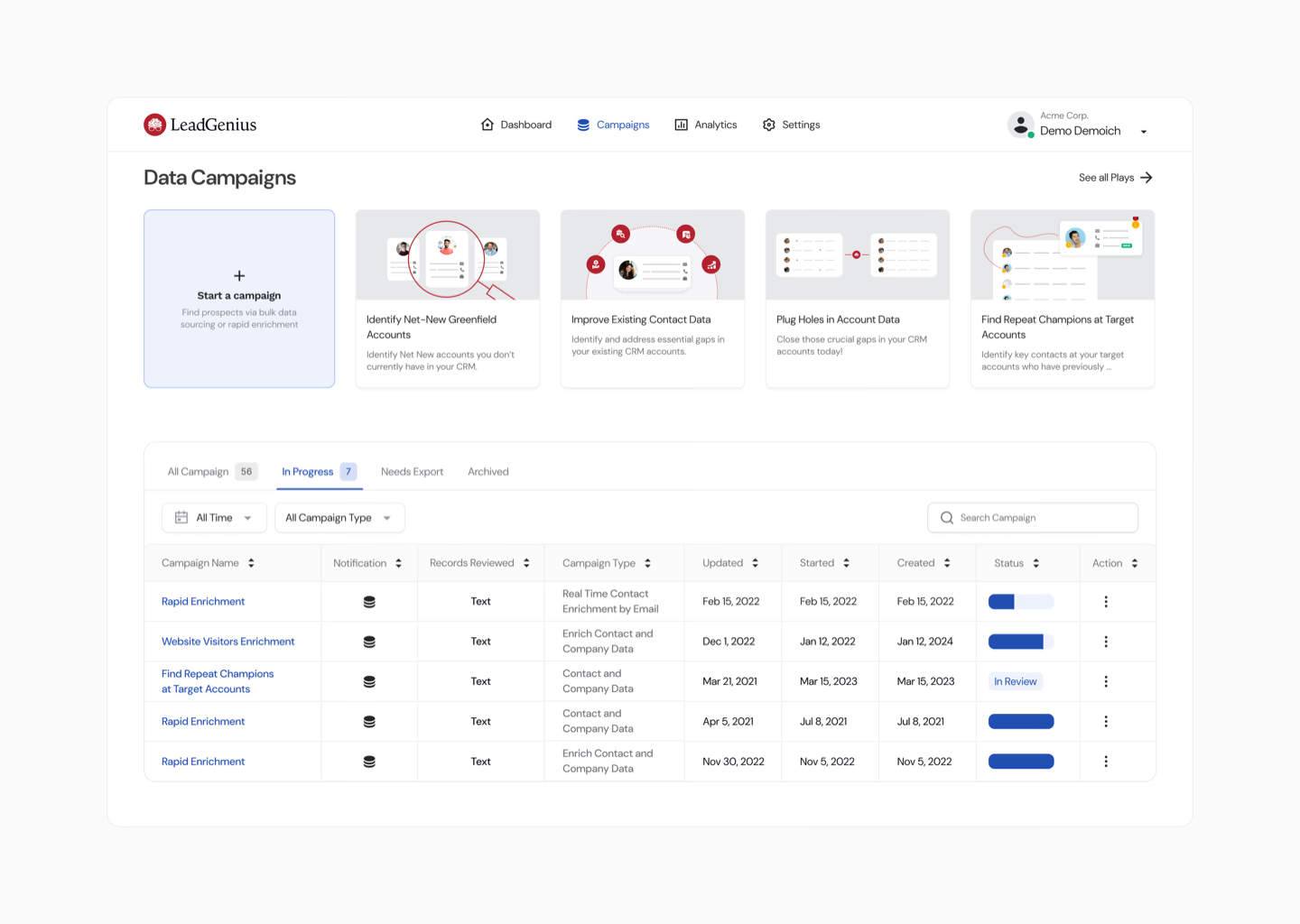

Experiment 1: The Guided Wizard

If we replace disconnected form pages with a role-aware, step-by-step wizard, campaign completion will increase and misconfiguration will decrease — because users will only see decisions relevant to their role and will build confidence progressively.

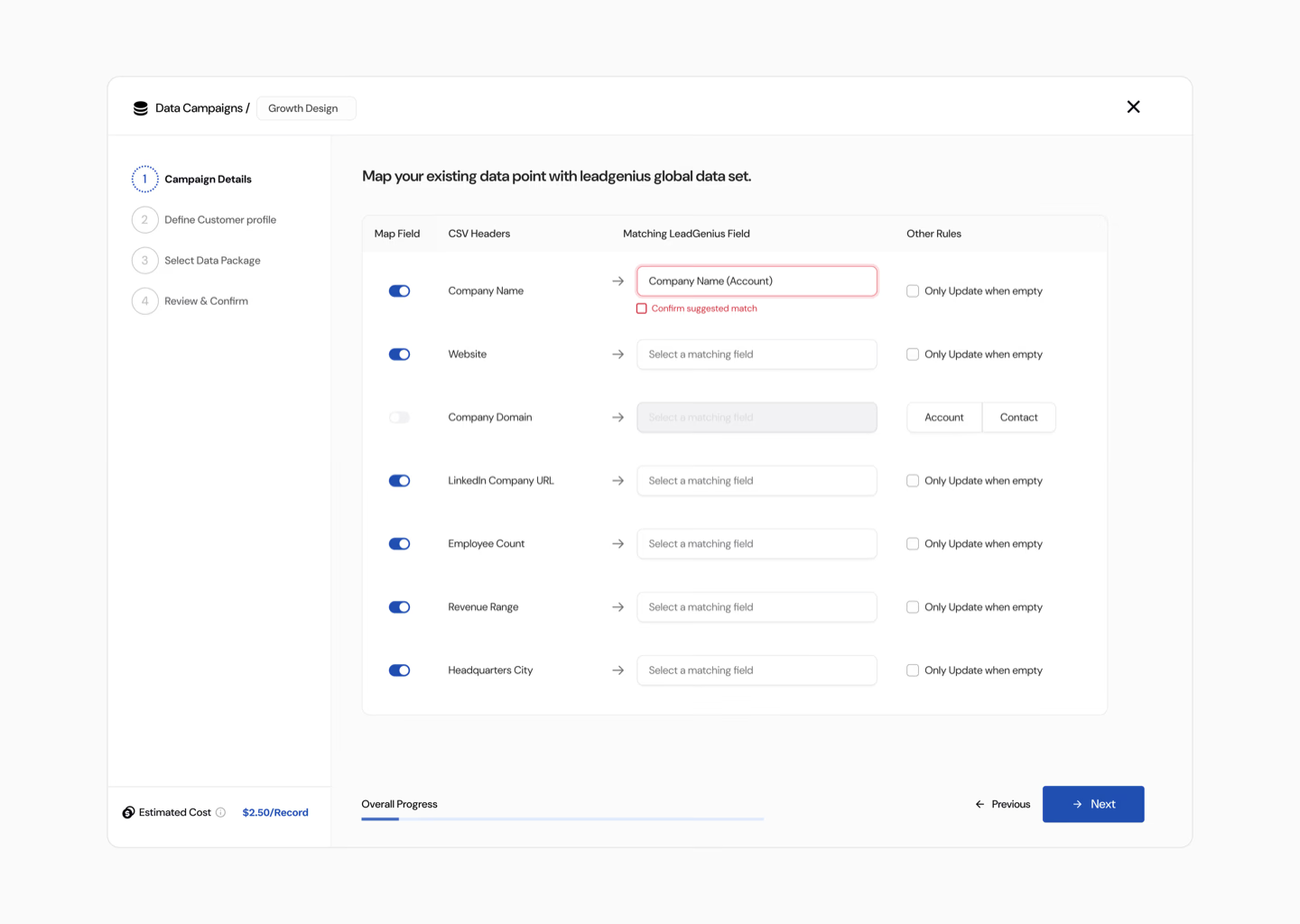

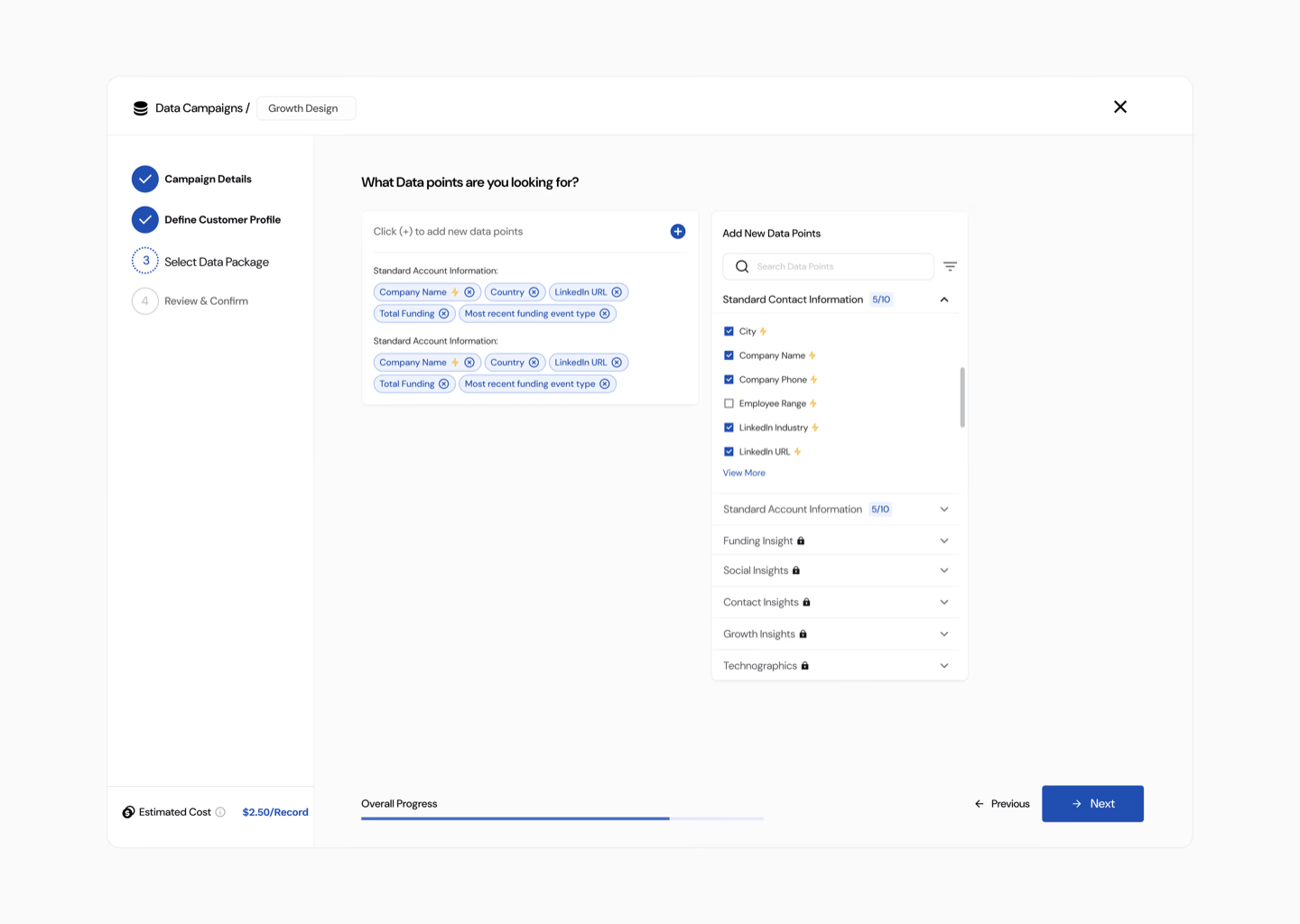

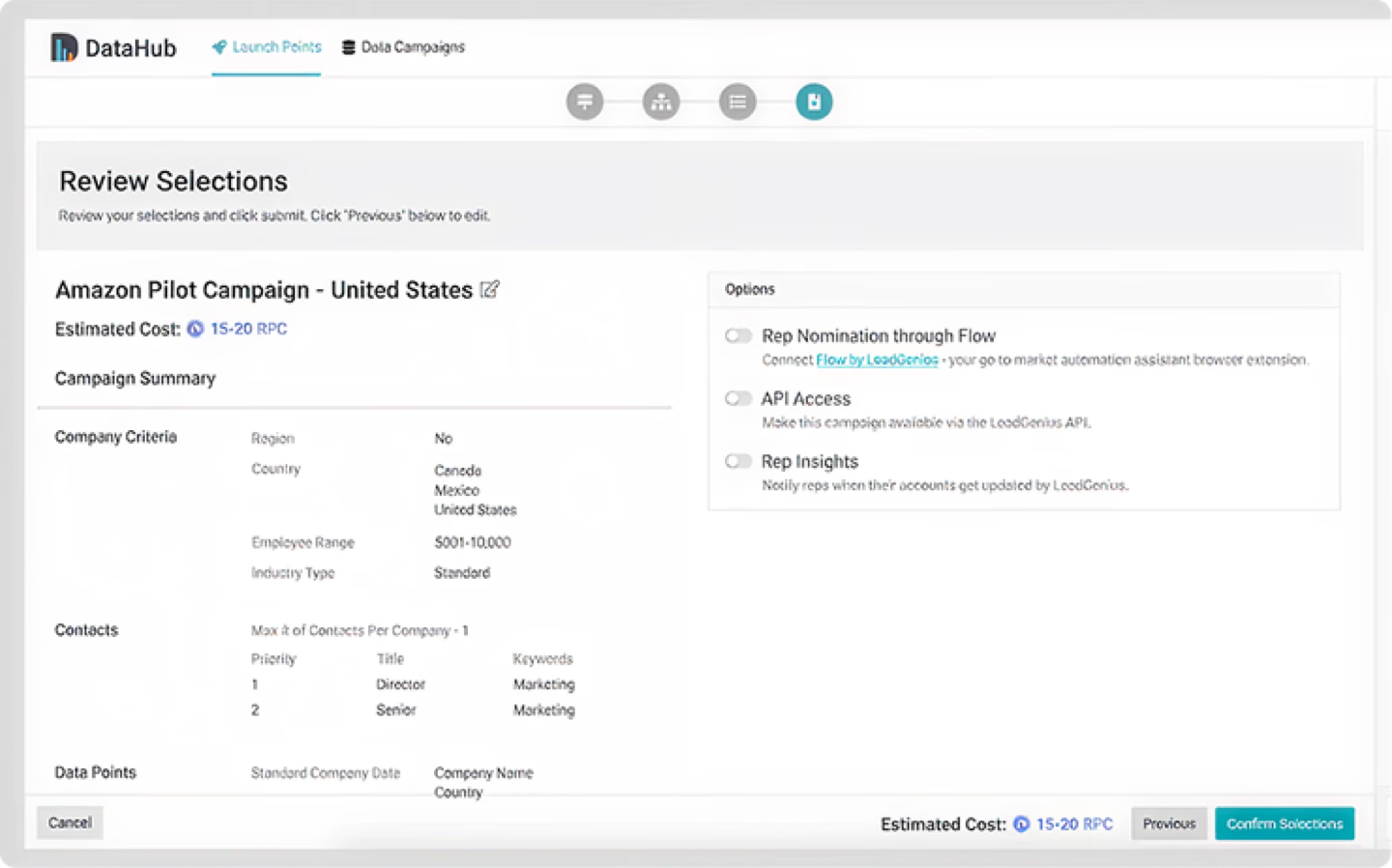

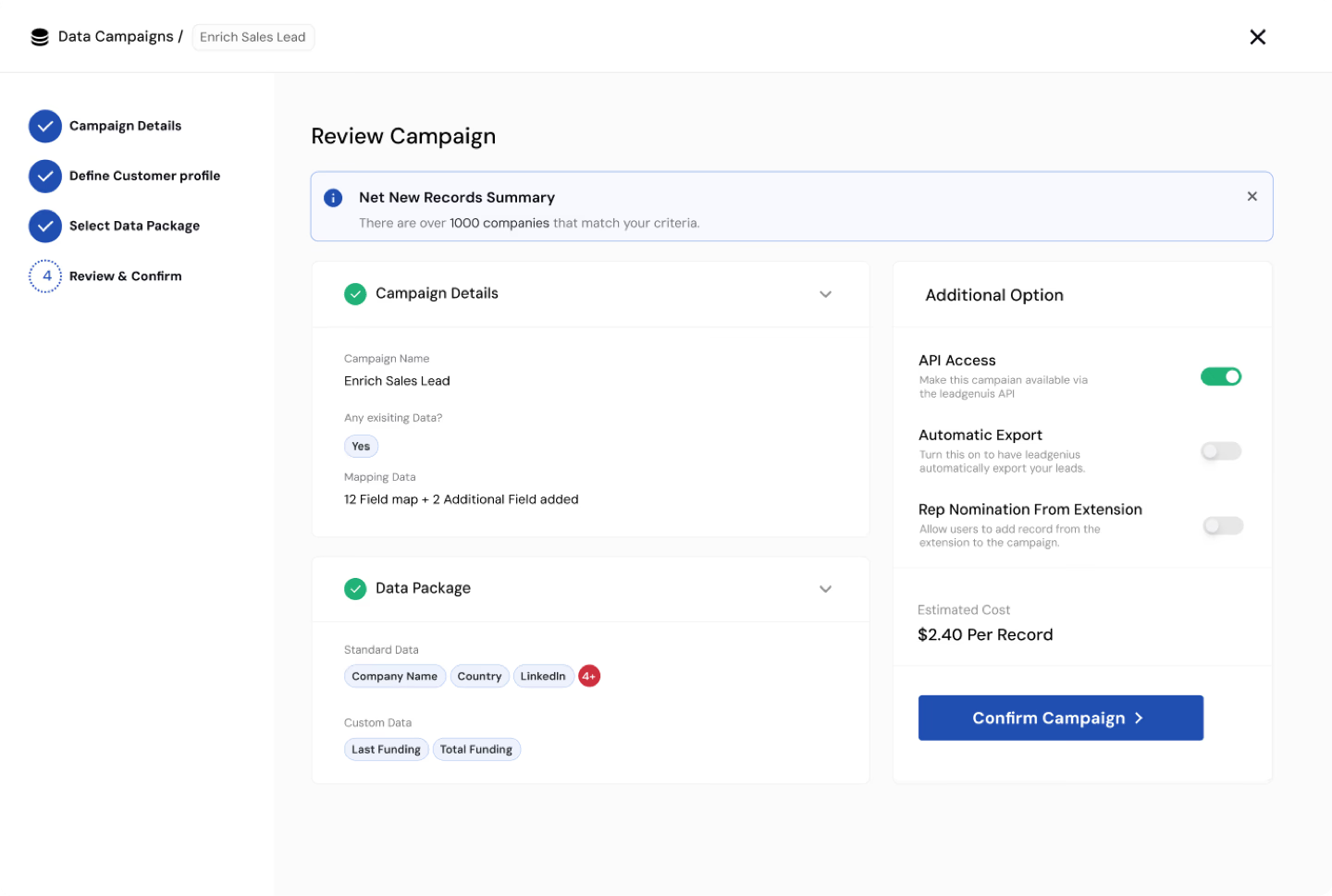

From scattered forms to a guided wizard. Each screen built on the previous one, reframing creation from "fill in a complex form" to "answer a sequence of clear questions." Progress was visible at every step.

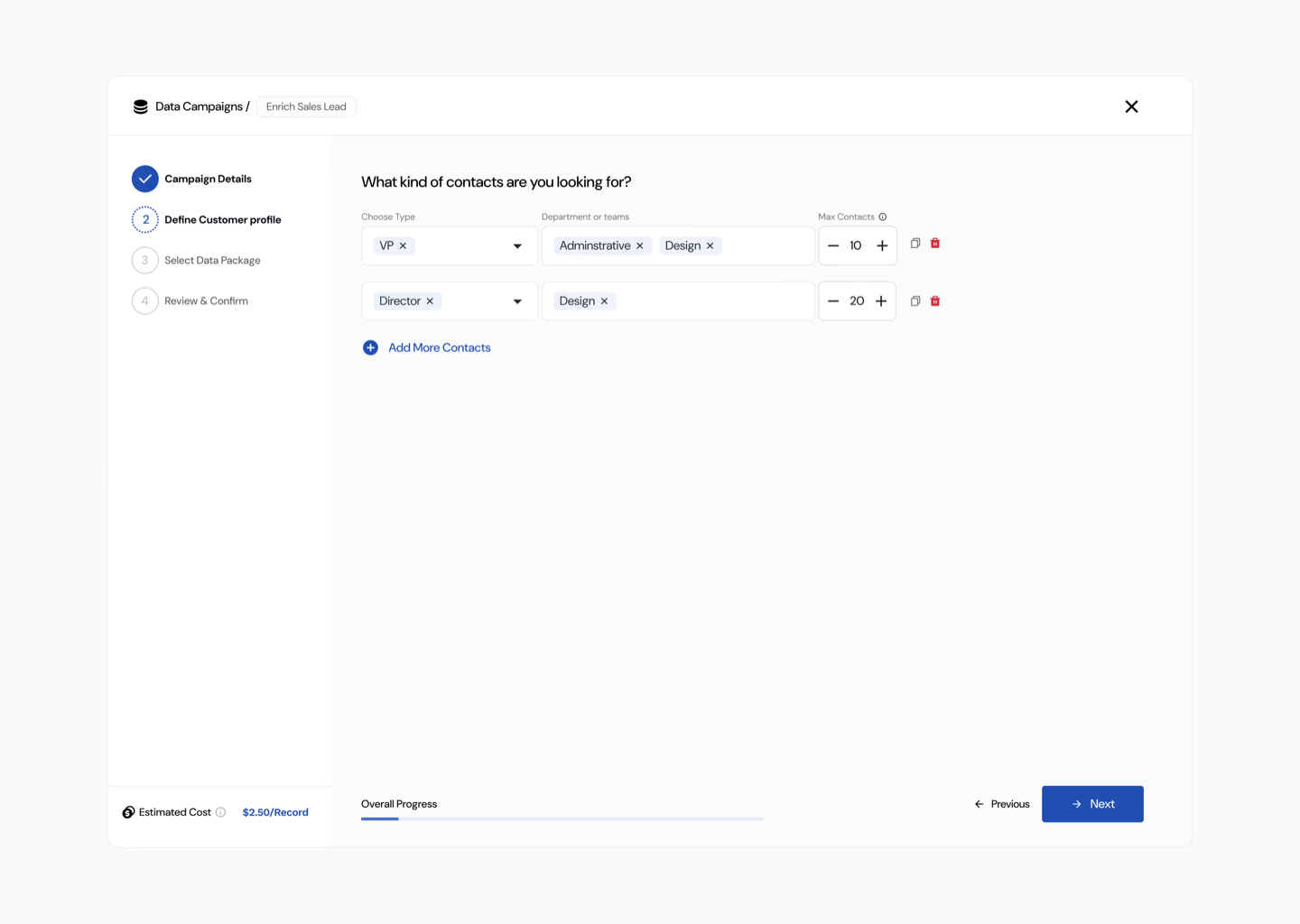

Progressive disclosure across roles. The wizard adapted based on who was using it. An IC saw a streamlined path: audience → sequence → launch. A team lead saw assignment and review steps. An admin saw template creation and permission controls. Same system — different surfaces.
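One way to picture "same system, different surfaces" is a single ordered step list where each step declares which roles see it. This is a sketch, not the shipped implementation: the step keys and the `Step` and `steps_for` names are hypothetical, and it assumes leads and admins also see the core IC steps, which the write-up does not spell out.

```python
from dataclasses import dataclass
from typing import FrozenSet, List

ALL_ROLES = frozenset({"ic", "lead", "admin"})


@dataclass(frozen=True)
class Step:
    key: str
    visible_to: FrozenSet[str]


# One wizard definition; roles only filter what is shown.
# Assumption: leads and admins see the core steps plus their own extras.
WIZARD: List[Step] = [
    Step("templates", frozenset({"admin"})),
    Step("permissions", frozenset({"admin"})),
    Step("audience", ALL_ROLES),
    Step("sequence", ALL_ROLES),
    Step("assignment", frozenset({"lead"})),
    Step("review", frozenset({"lead"})),
    Step("launch", ALL_ROLES),
]


def steps_for(role: str) -> List[str]:
    """Return the ordered step keys that a given role sees."""
    if role not in ALL_ROLES:
        raise ValueError(f"unknown role: {role}")
    return [step.key for step in WIZARD if role in step.visible_to]


print(steps_for("ic"))    # ['audience', 'sequence', 'launch']
print(steps_for("lead"))  # ['audience', 'sequence', 'assignment', 'review', 'launch']
```

Keeping one list and filtering it means a new step is added once and its audience is declared in the same place, rather than maintaining three separate flows.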

Admin-first rollout sequencing. I made a deliberate call with engineering to ship the admin experience first. Internal testing surfaced a critical dependency: admins needed to define campaign rules, enrichment guardrails, and team permissions before the governed IC and lead flows could work properly.

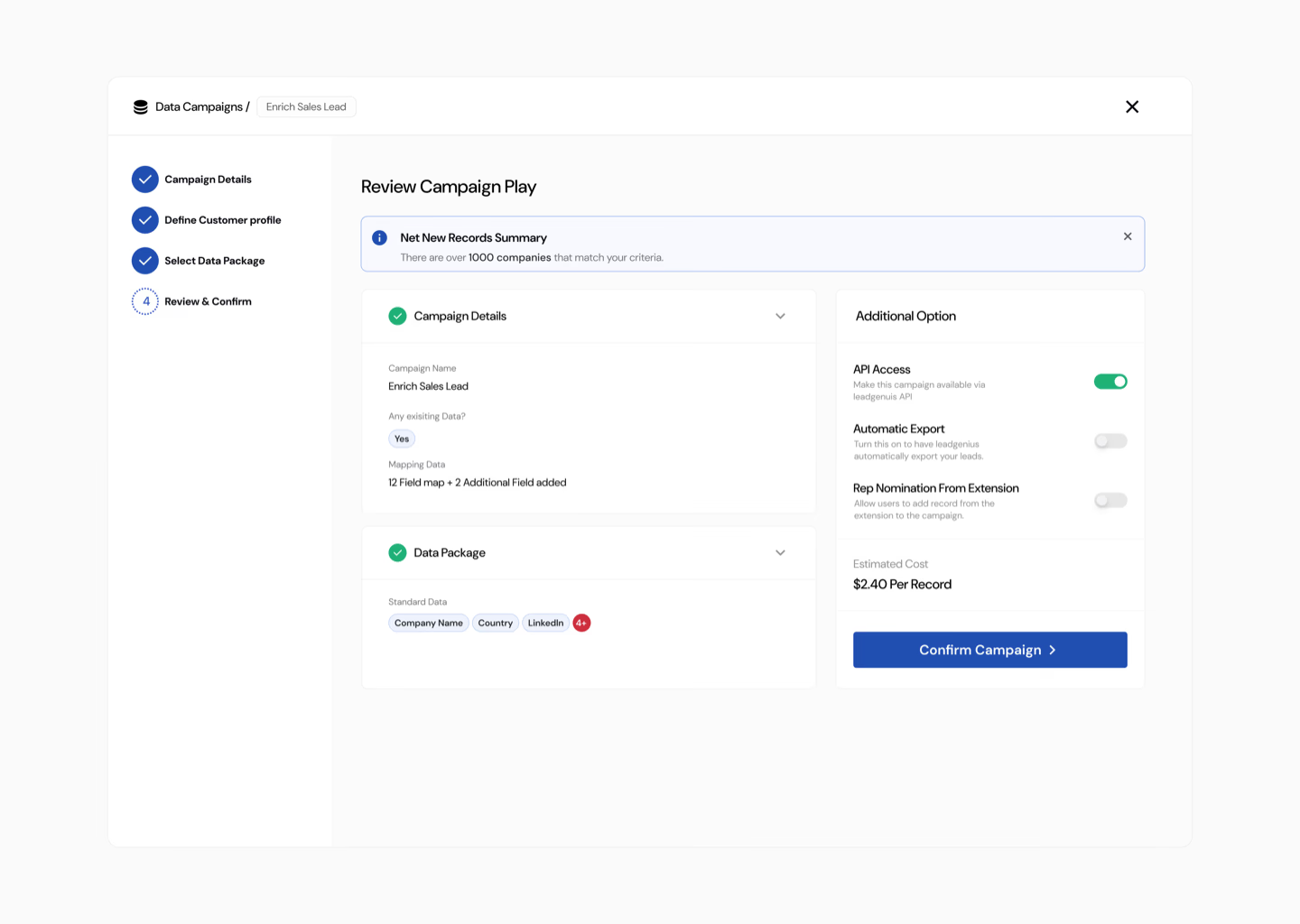

Confidence before launch. A summary review screen let users see every configuration choice in one view and edit inline before committing. For teams, this doubled as the approval surface. I replaced tooltips with inline guidance and added real-time validation that caught conflicts before launch.
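The pre-launch validation can be thought of as a set of checks run against the draft, each returning a plain-language message for the review screen. The sketch below is illustrative only: the `CampaignDraft` fields and the three rules are assumptions chosen to echo the failure types seen earlier (broken sequences, mismatched targeting, misconfigured enrichment), not the product's actual rules.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class CampaignDraft:
    # All fields are hypothetical, for illustration only.
    audience_size: int
    sequence_steps: List[str] = field(default_factory=list)
    enrichment_fields: List[str] = field(default_factory=list)
    # Guardrail an admin might define for the team.
    allowed_enrichment_fields: List[str] = field(default_factory=list)


def validate(draft: CampaignDraft) -> List[str]:
    """Return human-readable problems; an empty list means ready to launch."""
    problems = []
    if draft.audience_size <= 0:
        problems.append("Audience is empty: adjust targeting before launch.")
    if not draft.sequence_steps:
        problems.append("Sequence has no steps: add at least one.")
    blocked = [f for f in draft.enrichment_fields
               if f not in draft.allowed_enrichment_fields]
    if blocked:
        problems.append("Enrichment fields not allowed by your admin: "
                        + ", ".join(blocked))
    return problems


draft = CampaignDraft(
    audience_size=0,
    sequence_steps=["intro_email"],
    enrichment_fields=["email", "mobile_phone"],
    allowed_enrichment_fields=["email"],
)
for problem in validate(draft):
    print(problem)
```

Returning messages rather than raising on the first failure lets the summary screen show every conflict at once, next to the field where it can be edited.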

We tested a stripped-down 3-step flow against the guided wizard. The short flow had lower early drop-off — but the wizard had significantly higher completion and 7-day retention. Users who understood what they built came back. Users who were rushed through didn't. The shortest path to creation wasn't the shortest path to value.

Experiment 2: Plays (Campaign Templates)

With the wizard shipping results, I stayed close to the engagement data. A pattern emerged within weeks: users were duplicating existing campaigns and making small adjustments — same criteria, slightly different data points. They'd invented their own shortcut to avoid blank-canvas creation. The sales team flagged it independently.

If we offer pre-configured templates mapped to common use cases, users will create campaigns faster and more frequently — increasing engagement volume and reducing remaining creation friction.

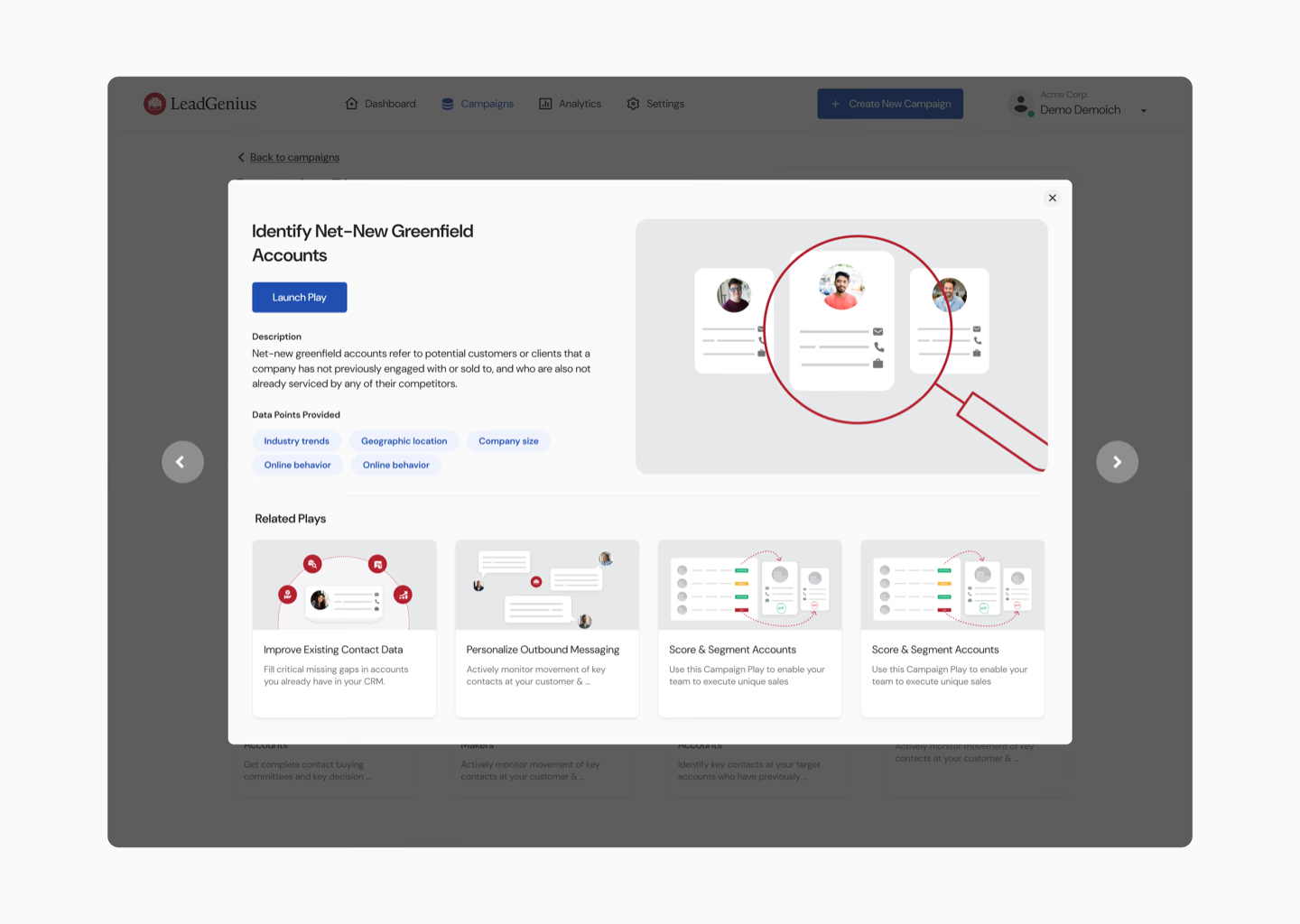

Working with sales, I mapped the most common campaign patterns to specific business use cases and created Plays — templated starting points like "Find decision-makers at target accounts" or "Enrich and verify a prospect list." Each Play came with sensible defaults, cutting a five-step wizard down to two steps. For teams, admins could create custom Plays enforcing their organisation's data rules.

I tested two placements — Plays on the dashboard homepage vs. Plays on the campaign page. Dashboard: 10% of Plays launched. Campaign page: 50% of Plays launched. Users were ready to act when already in campaign mode. We shipped the campaign page placement.

Results

In post-ship usability sessions, users described the wizard as "logical" and "obvious" — language that never appeared in pre-redesign feedback. Team leads reported trusting their reps' campaigns without needing to review every one. The hesitation and rage-clicking visible in earlier Hotjar replays disappeared in post-launch recordings. Sales reported that customers using Plays described campaign creation as "easy" for the first time.

Layered Impact

Campaign creation went from a source of confusion to a self-serve capability mid-market teams could complete independently.

The growth loop became functional: more campaigns → more data consumed → more value delivered → stronger retention → expansion revenue. PQLs generated through self-serve behaviour fed directly back to the sales team.

Reflection

I'd instrument the wizard for step-level analytics from day one. We had funnel-level data in Heap, but granular step timing would have let us optimise individual screens faster. I'd also explore personalising the wizard's defaults based on industry or company size — we treated all ICs the same, but their use cases varied more than the initial segmentation captured.

Growth design principles from this work

Measure the loop, not the feature. Campaign completion in isolation was a vanity metric. What mattered was whether completed campaigns led to retained users who created more campaigns. Every design decision was evaluated against the full loop, not just the immediate step.

Ship the insight, not just the interface. Plays didn't come from a roadmap. They came from watching user behaviour after launch and recognising that the next experiment was already hiding in the data.